TechSource Systems is MathWorks Authorised Reseller and Training Partner

Learn how to use MATLAB to estimate where an object will be at the next time step, based on an assumed physical motion model of the object

An autonomous system consists of four main components: perception, localization, planning, and control. Sensor Fusion and Tracking Toolbox™ focuses on perception, which starts with a fused position estimate of an object from the previous time step and then estimates where the object will be at the next time step, based on an assumed physical motion model of the object. Common questions tackled in sensor fusion and tracking systems include “How can I design trackers and localization algorithms?”
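The prediction step described above can be illustrated with a minimal sketch. It is written in plain Python rather than MATLAB and does not use any toolbox function; it assumes a one-dimensional constant-velocity motion model, and the function name `predict_constant_velocity` and the parameter `accel_var` (an assumed process-noise strength) are hypothetical names chosen for this illustration.

```python
def predict_constant_velocity(state, cov, dt, accel_var):
    """Kalman-style predict step for a 1-D constant-velocity model.

    state:     [position, velocity] from the previous time step
    cov:       2x2 state covariance as nested lists
    dt:        time step in seconds
    accel_var: assumed variance of unmodelled acceleration (process noise)
    """
    pos, vel = state
    # State transition x' = F x, with F = [[1, dt], [0, 1]]
    predicted_state = [pos + vel * dt, vel]

    p00, p01 = cov[0]
    p10, p11 = cov[1]
    # F P F^T, expanded by hand for the 2x2 case
    f00 = p00 + dt * (p10 + p01) + dt * dt * p11
    f01 = p01 + dt * p11
    f10 = p10 + dt * p11
    f11 = p11
    # Discrete white-noise acceleration model:
    # Q = accel_var * [[dt^4/4, dt^3/2], [dt^3/2, dt^2]]
    q00 = accel_var * dt**4 / 4
    q01 = accel_var * dt**3 / 2
    q11 = accel_var * dt**2
    predicted_cov = [[f00 + q00, f01 + q01],
                     [f10 + q01, f11 + q11]]
    return predicted_state, predicted_cov


# Example: object at 10 m moving at 2 m/s, predicted 0.5 s ahead
state, cov = predict_constant_velocity(
    [10.0, 2.0], [[1.0, 0.0], [0.0, 1.0]], dt=0.5, accel_var=0.0
)
```

The predicted covariance grows even without process noise, because uncertainty in velocity turns into uncertainty in position over the time step. A different motion model (for example, constant acceleration or constant turn rate) would change the transition matrix and the noise model.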

Attend our 1-day course that provides hands-on experience with developing and testing localization and tracking algorithms. Examples and exercises demonstrate the use of appropriate MATLAB® and Sensor Fusion and Tracking Toolbox™ functionality. Topics include:

The course is intended for engineers, scientists, and researchers who want to create multi-object trackers that fuse information from multiple sensors using Sensor Fusion and Tracking Toolbox.

Prerequisites: MATLAB Fundamentals or equivalent experience using MATLAB; basic knowledge of tracking concepts

Upon completion of the course, participants will be able to:

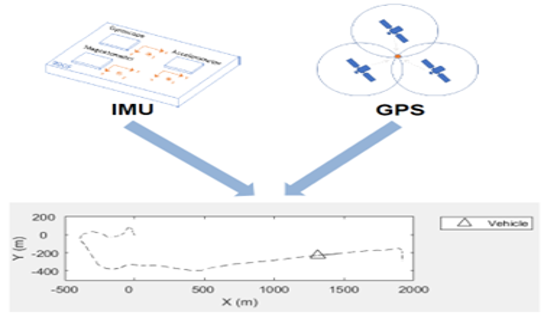

Objective: Fuse IMU and GPS sensor data to estimate position and orientation.

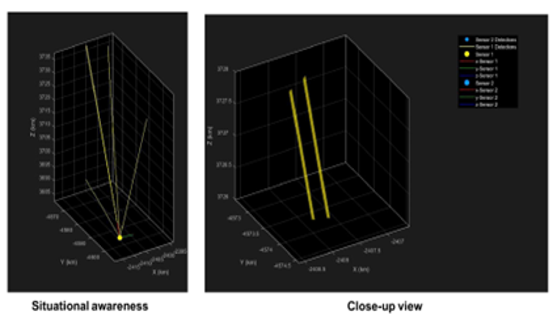

Objective: Import and process detections or generate scenarios used in multi-object trackers.

Objective: Select and tune filters and motion models based on tracking requirements.

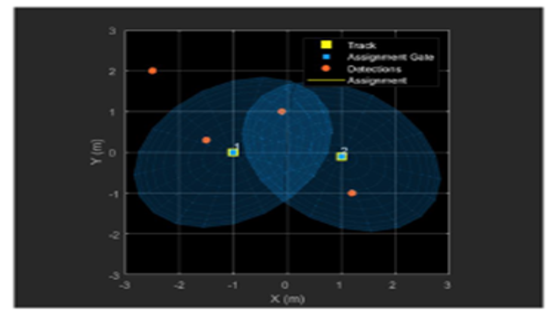

Objective: Determine the appropriate data association method for different tracking situations.
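As a rough illustration of what a data association method does, the sketch below pairs predicted track positions with new detections using a greedy nearest-neighbour rule and a distance gate. It is plain Python, not toolbox code, and it is only one simple option among many; the function name `nearest_neighbor_associate` and the `gate` parameter are hypothetical names used for this example.

```python
import math


def nearest_neighbor_associate(tracks, detections, gate):
    """Greedily pair predicted track positions with detections.

    tracks, detections: lists of (x, y) positions
    gate: maximum distance for a pairing to be accepted
    Returns a dict {track_index: detection_index}.
    """
    # Collect every track/detection pair that falls inside the gate,
    # sorted so the closest pairs are considered first.
    candidates = sorted(
        (math.dist(t, d), ti, di)
        for ti, t in enumerate(tracks)
        for di, d in enumerate(detections)
        if math.dist(t, d) <= gate
    )
    assignments = {}
    used_detections = set()
    for _, ti, di in candidates:
        # Each track and each detection may be used at most once.
        if ti not in assignments and di not in used_detections:
            assignments[ti] = di
            used_detections.add(di)
    return assignments


# Example: two tracks, three detections, one of which is clutter
pairs = nearest_neighbor_associate(
    tracks=[(0.0, 0.0), (10.0, 10.0)],
    detections=[(9.0, 9.0), (0.5, 0.0), (50.0, 50.0)],
    gate=3.0,
)
```

Greedy matching is cheap but can make poor choices when objects are closely spaced, which is one reason the choice of association method depends on the tracking situation.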

Objective: Create multi-object trackers to fuse information from multiple sensors such as vision, radar, and lidar.